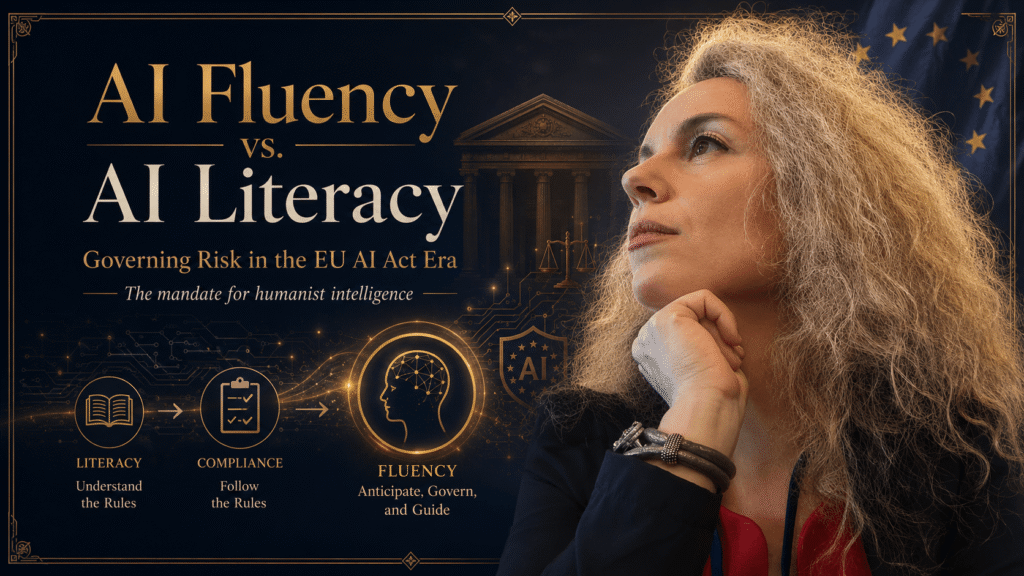

AI Fluency vs. AI Literacy: Governing Risk in the EU AI Act Era

Executive Summary: The Mandate for Humanist Intelligence

In the rapidly shifting landscape of the Intelligence Age, “AI Literacy” has moved from a competitive advantage to a baseline legal requirement. Under Article 4 of the EU AI Act, which has applied since 2 February 2025, organizations that provide or deploy AI systems must take measures to ensure a sufficient level of AI literacy among their staff, so that those systems are deployed safely and responsibly. For C-Suite and board-level executives, however, literacy is insufficient. To mitigate systemic risk and avoid the “Automation of Dysfunction,” leaders must achieve AI Fluency: the ability to maintain the Human Edge, specifically the capacity for Diagnostic Thinking, in order to audit autonomous systems and navigate high-stakes scenarios where code alone cannot provide the answer.

1. Defining the Chasm: Literacy vs. Fluency

Most corporate training programs focus on “AI Literacy”—teaching employees how to write prompts or use generative tools. While necessary, literacy is purely operational. It is the ability to “read and write” in the new language of machines.

AI Fluency, by contrast, is strategic and forensic. It is the ability to:

- Identify Hallucinations of Logic: Not just spotting a wrong fact, but identifying when an AI’s strategic reasoning is misaligned with human-layer reality.

- Manage the Human Model Problem: Understanding that AI can optimize a system but cannot architect a vision.

- Maintain Diagnostic Thinking: Preserving the human ability to see “the ghost in the machine”—the anomalies that don’t fit the training data.

Leaders who are merely literate are at risk of Brain Rust—a cognitive atrophy that occurs when critical judgment is entirely offloaded to autonomous agents. Fluency is the antidote to this rust.

2. The Legal Catalyst: The EU AI Act and AI Literacy

The EU AI Act is the world’s first comprehensive horizontal legal framework for AI. Crucially, Article 4 places a direct responsibility on “providers” and “deployers” (a category that covers most corporations using AI systems in a professional capacity) to take measures ensuring that their staff possess a sufficient level of AI literacy.

For CEOs and executives, this is no longer a “nice to have” HR initiative; it is a compliance requirement. Organizations should be able to demonstrate that those making high-stakes decisions, especially about “High-Risk” AI applications, understand the mechanics, limitations, and ethical implications of the tools they oversee. For high-risk systems, the Act also requires deployers to assign human oversight to people with the necessary competence, training, and authority. Failing to move from a “black-box” approach to an informed, fluent one is now a significant legal and financial liability.

3. Learning Through Failure: The Power of Simulation

How do you teach a CEO to handle an AI-driven systemic collapse? You cannot wait for a real-world disaster to perform a post-mortem. You must perform a Pre-Mortem through simulation.

In my work developing high-stakes simulation apps for leadership, we found that leaders achieve fluency only when they are forced to navigate “Broken Scenarios.” These simulations place executives in high-pressure environments where:

- An AI-driven procurement agent triggers a trade war.

- A “Frictionless” AI HR system accidentally purges the company’s most creative talent.

- Algorithmic Aversion in the customer base leads to a sudden, inexplicable revenue drop.

By failing in a simulated environment, leaders build the “cognitive muscle” needed to verify rather than merely trust. In our experience, this simulation-based approach is one of the most effective ways to meet the EU’s AI literacy obligation while building genuine operational resilience.

4. The Three-Question Test for Humanist Scaling

To prevent People Debt™ (the accumulated liability of unmanaged human friction), leaders must apply a forensic filter to every AI implementation. My Mirror Test Framework™ uses the “Three-Question Test” to determine where AI ends and human judgment must begin:

- What requires pattern recognition? (Automate this. AI excels at processing vast datasets to find trends.)

- What requires reading what is NOT being said? (Protect this. High-stakes negotiation, founder alignment, and “Energetic Dissonance” require the Human Edge.)

- What happens if we are wrong? (If the cost is existential—reputational ruin, legal liability, or systemic collapse—human Diagnostic Thinking must be the final fail-safe.)

5. Avoiding “Workslop” and the Automation of Dysfunction

A literate leader sees AI as a way to increase volume. A fluent leader sees the danger of “Workslop”: the proliferation of low-quality, AI-generated output that lacks human intent.

Scaling a broken human process at 10x speed using AI doesn’t solve the problem; it accelerates the autopsy. AI is an amplifier. If your organization has underlying People Debt™ (founder drift, lack of trust, or siloed communication), AI will amplify that debt, leading to a “Scaling Stall.” Fluency allows a leader to pause the technical scale until the human foundation is audited and repaired.

6. Building the Fail-Safe: The Forensic Auditor Role

In the 2026–2030 strategic window, the most valuable C-Suite leader will not be the one who implements the most AI, but the one who acts as the Forensic Auditor of Human Systems.

This leader uses the Mirror Test™ to:

- Listen for the Gap: Detecting when a team’s stated AI strategy doesn’t match their emotional conviction.

- Track Linguistic Patterns: Noticing when “Trust” is mentioned as a buzzword but is missing from the operational reality.

- Locate the Confidence Edge: Identifying where the team’s understanding of the AI’s “Black Box” ends and blind faith begins.

Conclusion: Beyond Artificial Harmony

The goal of AI Fluency is not to reach a state of “Artificial Harmony” where the machines and humans appear to work perfectly because no one is asking the hard questions. The goal is Systemic Realism.

As the architects of the 100-year human and the guardians of venture continuity, we must treat AI literacy as the floor and AI fluency as the ceiling. By using simulation-based learning and forensic audits, we ensure that our leadership architecture is as resilient as the technology we deploy.

Is your leadership team literate enough to follow the rules, or fluent enough to lead the change?