AI Safety: Why the Human Layer is the Ultimate Systemic Fail-Safe

Executive Summary: The Forensic Reality of AI Risk

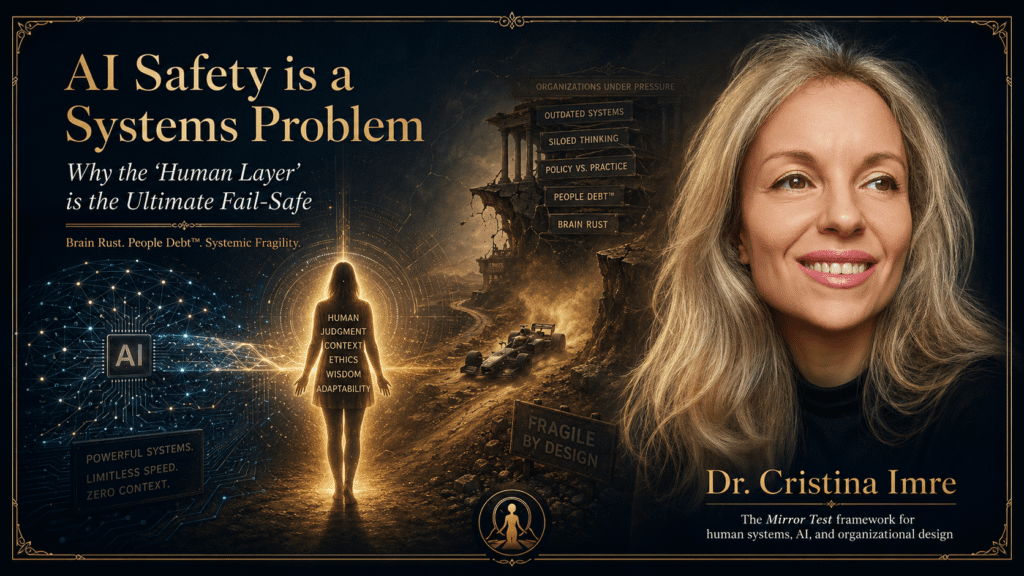

In the Intelligence Age, the most consequential risk to institutional value and global stability is not technical obsolescence or algorithmic bias; it is Systemic Fragility. While billions are invested in code alignment, organizations often ignore the human dynamics that constitute their actual operational foundation. AI acts as an amplifier rather than a fixer. If an organization scales technological wonders on top of misaligned human structures, it creates a “Scaling Stall” or total enterprise collapse. To achieve true AI safety, we must secure the human foundation through a forensic audit before technical scaling begins.

1. The Fallacy of Pure Code: Code vs. Context

The current industrial paradigm is obsessed with the rapid deployment of DeepTech solutions. However, we are operating under a fundamental mismatch. For two hundred thousand years, the human animal was designed for survival and reproduction, not long-term systemic management. We once made a “Bargain,” trading biological freedom for civilizational predictability.

Today, we are upgrading the human while forcing it to live inside a civilizational operating system built for a shorter-lived species. This creates a “Formula 1 on a dirt road” scenario: the biological and technical machines are becoming magnificent, but the organizational infrastructure is catastrophically outdated. AI safety is not just a software problem; it is a systems problem. If we focus only on the “F1 car” (the AI) and ignore the “dirt road” (the human context), the system is destined for failure.

2. Introducing “Brain Rust”: The Silent Systemic Failure

As organizations prematurely outsource critical thinking to autonomous systems, they encounter a phenomenon I call “Brain Rust”. This is the atrophy of cognitive skills and independent decision-making. Gartner predicts that by 2026, 50% of global organizations will require “AI-free” skills assessments during hiring to combat this critical-thinking decay.

Brain Rust is a top strategic risk. When teams offload judgment to AI entirely, they lose their sense of purpose and their “Human Edge”: the unique capacity for diagnostic thinking that AI cannot replace. Observations from Dr. Imre’s advisory practice suggest that total cognitive offloading can lead to a complete loss of human judgment within six months. A safe system requires humans who can see what does not fit the pattern, not just machines that excel at pattern matching.

3. People Debt™: The Hidden AI Risk Amplifier

AI safety is compromised by People Debt, which is the accumulated interest on unaddressed human friction, co-founder misalignment, and cultural fragility. While technical debt is visible in code, People Debt is a “Silent Saboteur” hidden in linguistic patterns and energy shifts.

- The Dysfunction Tax: Organizational misalignment leads to productivity losses of 30-40%. For a venture burning $200K per month, that is a “tax” of $60,000-$80,000 every month.

- The Scaling Trap: Implementing AI on a broken human foundation simply automates dysfunction, making the company “messy at 10x speed”.

- Workslop: AI proliferation can accelerate “Lazy Thinking” and the production of “Workslop”—low-quality work produced without sufficient human oversight.
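
The Dysfunction Tax arithmetic above can be reproduced with a short sketch. The function name `dysfunction_tax` and its parameters are illustrative assumptions, not part of any published framework; the burn rate and loss percentages are the figures quoted in the list.

```python
def dysfunction_tax(monthly_burn: int, loss_pct: int) -> float:
    """Monthly cost of misalignment: burn multiplied by the productivity loss.

    Hypothetical helper for illustration; loss_pct is a whole-number percentage.
    """
    return monthly_burn * loss_pct / 100


monthly_burn = 200_000  # $200K monthly burn, as in the example above
low = dysfunction_tax(monthly_burn, 30)   # 30% productivity loss
high = dysfunction_tax(monthly_burn, 40)  # 40% productivity loss

print(f"Dysfunction Tax: ${low:,.0f}-${high:,.0f} per month")
# prints "Dysfunction Tax: $60,000-$80,000 per month"
```

Swapping in a venture's own burn rate gives a rough first estimate; the 30-40% range itself is the article's figure and is taken as given here.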

Research shows that 65% of high-potential startups fail due to co-founder conflict rather than a lack of market fit. If this human friction is amplified by AI, the resulting organizational implosion is almost certain.

4. The Boeing 737 Max Warning: Diagnostic Thinking vs. Executable Logic

The Boeing 737 MAX is a grave case study in systemic failure. It represents the “Frictionless Trap,” where an organization prioritizes efficiency and zero drag over systemic resilience. When technical scaling ignores the human foundation, the results are catastrophic.

Safety requires “Systemic Resilience” (robustness) rather than merely frictionless design. A resilient organization treats “friction” as a feature that provides traction, and it builds a “Snow Tires” layer of leadership assets, including cognitive slack and redundancy. In a frictionless system, the departure of a single key person can create an immediate crisis. AI safety demands a shift toward governance as engineering, where we monitor the organizational environment as closely as the technical performance.

5. AI Fluency: The New Leadership Literacy

To protect the “Human Edge,” organizations must adopt AI Fluency. This is not merely technical skill; it is the capacity to manage the “Human Model Problem”. Patients and organizations do not just need biology or code fixed; they need perception, context, and permission.

The Three-Question Test for Humanist Scaling provides a framework for this fluency:

- What requires pattern recognition? Automate this, as AI excels here.

- What requires reading what is NOT being said? Protect this; it is the core of human judgment.

- What happens if we are wrong? If the cost is existential (e.g., strategic direction), require human judgment.
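
One way to make the Three-Question Test concrete is as a small triage function. This is a minimal sketch under stated assumptions: the `Task` fields, the `Action` labels, and above all the order in which the questions are checked (existential cost first, then the unsaid, then pattern recognition) are illustrative choices, not a specification from the framework.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AUTOMATE = "automate"
    PROTECT = "protect as human judgment"
    REQUIRE_HUMAN = "require human judgment"


@dataclass
class Task:
    name: str
    is_pattern_recognition: bool   # Question 1
    needs_reading_unsaid: bool     # Question 2
    error_is_existential: bool     # Question 3


def triage(task: Task) -> Action:
    """Apply the three questions, most conservative answer first (an assumption)."""
    if task.error_is_existential:
        return Action.REQUIRE_HUMAN
    if task.needs_reading_unsaid:
        return Action.PROTECT
    if task.is_pattern_recognition:
        return Action.AUTOMATE
    # Assumption: when none of the questions clearly applies, keep a human in charge.
    return Action.PROTECT


invoice_matching = Task("invoice matching", True, False, False)
strategy_pivot = Task("strategic direction", True, True, True)

print(triage(invoice_matching).value)  # prints "automate"
print(triage(strategy_pivot).value)    # prints "require human judgment"
```

Checking the existential question first means a task that looks like pure pattern recognition is still routed to a human whenever the cost of being wrong is too high.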

Organizations must realize that automated systems cannot replicate the “Primal Intelligence” required for complex problem-solving and ethical governance.

6. The Mirror Test Framework™: A Human Forensic Audit

The Mirror Test Framework™ (MTF) is a proprietary diagnostic designed to identify systemic friction points in just 15 minutes. It bridges the gap between behavioral science and high-stakes operations through a five-step forensic audit:

- Step 1: Listen for the Gap (Energetic Dissonance): Analyze the “moment where energy drops” to find discrepancies between words and emotional conviction.

- Step 2: Track Repetition (Linguistic Patterns): Identify “The Repeat”—words mentioned 3+ times—to reveal where hope has replaced architecture.

- Step 3: Locate the Confidence Edge: Map the psychological terrain to find where leadership confidence evaporates.

- Step 4: Name it Directly (Radical Transparency): Move from “Artificial Harmony” to naming the unsaid truth, triggering a “Sigh of Relief” and turning “ghosts” into workable problems.

- Step 5: Fix the Foundation First (Operational Moratorium): Halt technical scaling until the human foundation—trust and alignment—is repaired.
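
Of the five steps, Step 2 is the most mechanical, and a rough version can be sketched in code. The example below counts words that appear three or more times in a transcript. The function name `find_repeats`, the small stopword list, and the sample transcript are all hypothetical; the MTF itself is proprietary, and this sketch is not its implementation.

```python
import re
from collections import Counter

# Assumption: a tiny illustrative stopword list; a real audit would need a fuller one.
STOPWORDS = {"a", "an", "and", "the", "is", "are", "we", "i", "to", "of", "it", "that"}


def find_repeats(transcript: str, min_count: int = 3) -> dict[str, int]:
    """Return non-stopword words mentioned at least min_count times."""
    words = re.findall(r"[a-z']+", transcript.lower())
    counts = Counter(word for word in words if word not in STOPWORDS)
    return {word: n for word, n in counts.items() if n >= min_count}


sample = (
    "We hope the launch works. I hope the team aligns. "
    "Honestly, hope is the plan."
)
print(find_repeats(sample))  # prints {'hope': 3}
```

A count like this only flags candidates for “The Repeat”; deciding whether a repeated word signals that hope has replaced architecture remains a human judgment.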

7. Systemic Longevity: The 100-Year Human Bridge

AI safety and human longevity are deeply intertwined. We are the architects of the 100-year human. This requires a new story: not staying young forever, but building capacity for the whole journey. The transitions along the way are not midlife crises; they are scheduled system reboots.

AI can optimize a system, but it cannot architect a life. We must stop designing for short lives and start redesigning the world for the humans we are actually becoming. Longevity medicine asks the body to build capacity, just as robust organizations must build systemic capacity to handle the shocks of the Intelligence Age.

Conclusion: Beyond Artificial Harmony

True AI safety requires us to mandate Radical Candor over Artificial Harmony. We must protect “Type 2” analytical thinking to ensure AI does not cause “Brain Rust”. By tracking psychological leading indicators, such as founder energy and linguistic repeats, boards can reliably predict future financial performance and systemic risk.

The human does not live in pieces; the human lives as one system. We must secure the “human layer” before implementing technical strategies. We are the asset protectors. By applying these forensic human standards, ventures can scale effectively without losing their Human Edge, ensuring that technology serves a higher ethical goal.

Stop repeating the old stories. Start architecting the 100-year human system.